According to a recent study by the Bundesarbeitgeberverband der Personaldienstleister e.V., almost half of recruiting agencies use artificial intelligence (AI) as part of the recruiting process. However, the companies using their services have major gaps in their knowledge which need to be rectified: around 80 percent do not yet use AI. Only roughly one-fifth of companies and approximately one-quarter of recruiters trust AI decisions.

EU AI Act aims to strengthen trust

With the EU AI Act, the EU Commission intends to overcome the skepticism among companies and consumers regarding AI and to strengthen the competitiveness of the European economy. As previously reported, Brussels is pursuing a risk-oriented approach: does an application pose a low, high, or unacceptable risk to human health, safety, and fundamental rights? In future, high-risk AI used in healthcare or transport, for example, will be subject to strict obligations. The same essentially applies to AI systems used in areas such as employment, human resource management, and access to self-employment, as AI can have a real impact on career prospects and livelihoods. This legislation will affect both providers that develop or market AI systems, for example for people analytics or recruiting, and users such as companies employing smart software for HR management.

Strict regulations for high-risk applications

High-risk AI systems will be subject to extensive requirements. These will include risk management systems spanning the entire life-cycle of the AI, together with transparency requirements to adequately inform users about the results and how these are used. Systems whose models are trained on data will require training, validation, and testing datasets that fulfill the quality criteria specified by the EU AI Act. Companies will also have to make information available to users and comply with the specifications governing technical documentation and the recording of events. Moreover, requirements regarding the accuracy, robustness, and cybersecurity of the systems will also be defined. Before an AI system is launched, it will have to undergo a conformity assessment and obtain CE marking.

Broad definition of AI covers many applications already in use

The new requirements affect almost all algorithmic decision-making and recommendation systems, as the draft AI Act uses a very broad definition of artificial intelligence. As a consequence, many HR applications already in use also fall within its scope. According to the German AI Association, this breadth is one of the major criticisms of the draft AI Act, along with an overly broad concept of high-risk applications. Instead, the regulations should only cover systems which may pose a high risk to security or fundamental rights. Currently, the EU Parliament and EU Council continue to discuss changes to the definition of AI and, therefore, the actual scope of the regulation.

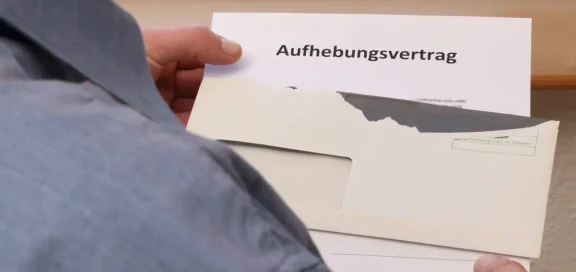

New EU liability rules for AI

Which due diligence requirements will apply to which systems in the future? The EU Commission’s changes to liability regulations make this issue all the more relevant for companies: the Product Liability Directive will be updated to address tech products and, in future, it will also cover inadequate IT security, for example. In addition, the Commission published the draft AI Liability Directive at the end of September. This aims to modify the rules governing non-contractual, fault-based civil claims for damage caused by AI systems. In the future, the causation of damage by an artificially intelligent system (causality) is to be presumed under certain circumstances. The Commission assumes that it will be very difficult, if not impossible, for an injured party to prove that an AI caused the harm by violating a legal obligation. The reasoning is based on the opacity of AI systems, whose decisions are not fully comprehensible. The defendant may rebut this presumption, for example by providing proof that the damage had another cause.

The presumption of causality is also accompanied by easier access to relevant evidence for injured parties. Upon request, courts shall decide on whether to disclose information regarding high-risk AI systems in order to identify responsible individuals or failures. Nevertheless, business secrets and sensitive information should remain protected wherever possible.

The liability claims can be directed against both providers and users of an AI. For example, this could involve a company which uses AI for recruiting purposes.

HR needs to take action

According to a study by Bitkom, the IT industry association, only nine percent of companies use artificial intelligence. This is because 79 percent have concerns about IT security risks and 61 percent fear data protection violations. Potential application errors when using AI are a deterrent for 59 percent. However, these risks are preventable, and HR in particular stands to benefit from a diverse range of opportunities. For example, job ads can be optimized to precisely address the preferred target group and to make them easy for applicants to find. Chatbots can remain available around the clock to enable initial contact with candidates. Furthermore, AI can suggest additional career paths along with suitable training or activities based on an employee’s profile. However, successful implementation requires extensive preparation: only companies which automate “good” processes can achieve improvements. In contrast, AI will not improve poor processes but only accelerate them, at best. In addition, the quality of the training data is crucial for success, because algorithms always involve discrimination risks.

Avoid flying blind

HR managers can minimize the risks and benefit from the opportunities by working closely with other departments such as IT, Data Management, and Legal & Compliance and also by proceeding step-by-step.

- Create transparency

Questions to be analyzed include: Which AI applications does the company utilize? Do they meet requirements for trustworthy systems? As already reported, the checklist for trustworthy AI from the High Level Expert Group on AI can be used for this purpose. Furthermore, the KIDD research project from the German Federal Ministry of Labor and Social Affairs provides suggestions regarding transparent and legally secure work with AI. Other questions requiring analysis include: Which data is used? Which data do we need and with which quality? Which data protection regulations apply? Which rules apply regarding IT security? To what extent does the works council have to be involved?

- Alignment with the future requirements of the EU AI Act and the new EU rules for product liability as well as AI liability

- Implement risk management across the entire life-cycle of a high-risk AI

The risk management should focus on aspects such as:

- Are the transparency and documentation requirements fulfilled? Which processes ensure that these are updated to address new requirements and technical developments?

- Monitoring potential future risks, such as when machine-learning software changes how it functions

- Are AI incidents detected quickly and is it possible to rapidly respond to any damage?

- Designate responsible persons, describe processes and anchor these in the organization

- Regular system tests and risk plans

- Do humans remain the final authority when decisions are made?

- Contract check-up: To what extent can liability be limited? Which provisions can help to safeguard against claims concerning AI software suppliers and service providers?

- Draw up the company’s own Code of Conduct

As already reported, preparing a code of conduct can help companies to counteract skepticism regarding AI. Such codes of conduct can be based on the “Guidelines of the HR Tech Ethics Advisory Board for the Responsible Use of Artificial Intelligence and Other Digital Technologies in Human Resources”, for example.

Extensive amendments to both the EU AI Act and the EU AI Liability Directive can be expected in the course of the further legislative process. Companies and HR managers need to keep an eye on the continuing developments to take any necessary steps in good time. According to a benchmarking study carried out by Personalmagazin together with the University of Mannheim and the Rhine-Main University of Applied Sciences, HR managers regard digitization as a major factor when it comes to better achieving a company’s HR objectives. For 83 percent, HR drives the change. Accordingly, HR will also play an important role in overcoming skepticism regarding the use of artificial intelligence at the company. One way of doing so is for HR managers to create transparency and demonstrate how risks can be minimized. Above all, the issue requires a thorough analysis of training data and learning mechanisms.